When Intel launched the 5th generation of CPUs, quad-core CPUs were still firmly in the saddle in the gaming industry. Having many cores was very helpful for tasks that scale well, but in games they rarely brought an advantage at that time. A lot has changed since then, and more and more developers are now optimizing for 8 cores and beyond. To avoid running too far into a GPU limit, and to avoid testing unrealistically at 720p / low, I selected the high preset and a resolution of 1920×1080 in all games. The game selection was more or less based on what I already own and what is somewhat CPU-heavy thanks to an open world with lots of NPCs and interactable detail. So much for the theory; below are the results from the testing.

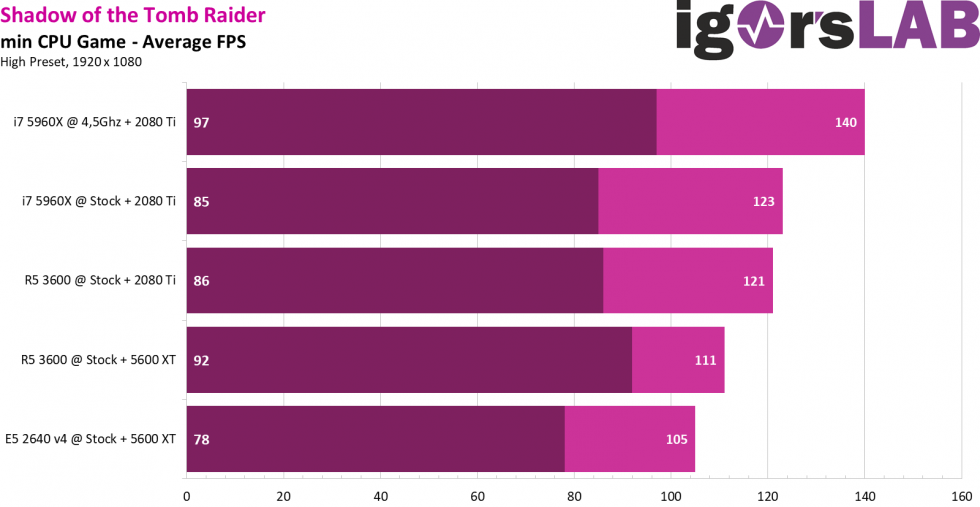

Kicking things off is Shadow of the Tomb Raider (2018), because its built-in benchmark displays a wonderful amount of information:

Thanks to the “GPU Bound” indicator, it is easy to see here that combining an aging processor with a newer high-end graphics card can quickly lead to a big bottleneck. With the RTX 2080 Ti, the game or card is practically always waiting for the CPU. With a 5600 XT, which sits more in the upper mid-range, the whole thing looks much more balanced. But with a difference of just 10 FPS when using the R5 3600 + 5600 XT, you can definitely save yourself the extra cost of the more expensive card in this title.

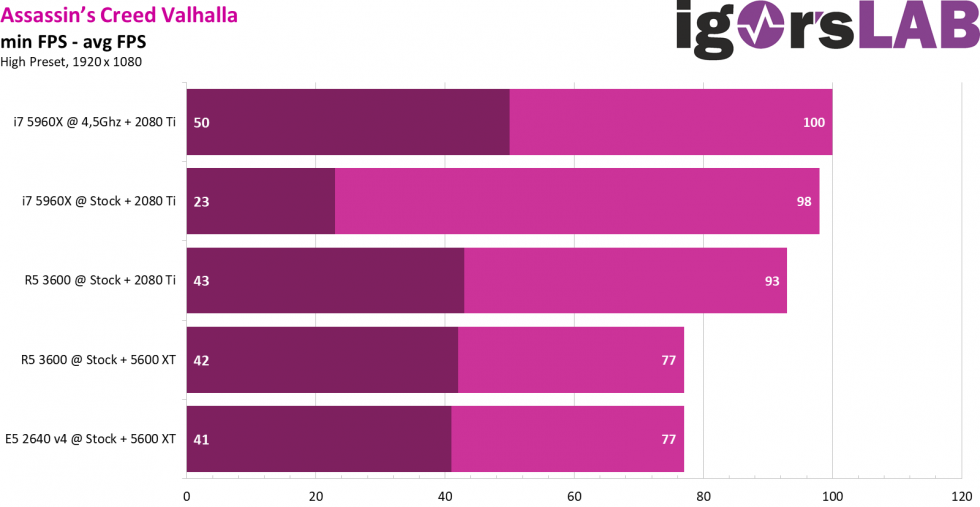

Next up is Assassin’s Creed Valhalla (2020), which is significantly more demanding on the graphics card. Its benchmark doesn’t break the results down in as much detail, but the CPU latency and the 1% and 0.1% FPS figures are interesting nonetheless:

The low minimum FPS on the stock 5960X didn’t disappear even after several runs, and I can’t quite explain it. The game itself ran smoothly for the most part, and I didn’t notice any constant stuttering or similar.
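For readers unfamiliar with the 1% and 0.1% figures, here is a minimal sketch of one common way such values are derived from a list of frame times. It assumes the "average of the slowest N% of frames" definition; the game's own benchmark may calculate them differently, and the function names `low_fps` and `average_fps` are purely illustrative, not taken from any tool used in this test.

```python
def average_fps(frame_times_ms):
    """Average FPS over the whole run: frames rendered divided by total time."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def low_fps(frame_times_ms, fraction):
    """FPS equivalent of the mean frame time of the slowest `fraction` of frames.

    fraction=0.01 gives a "1% low", fraction=0.001 a "0.1% low".
    Assumption: this is only one of several definitions in circulation.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    # Always consider at least one frame, even for very short captures.
    count = max(1, round(len(slowest_first) * fraction))
    worst = slowest_first[:count]
    return 1000.0 / (sum(worst) / len(worst))


if __name__ == "__main__":
    # Made-up sample: mostly 10 ms frames with a handful of 50 ms spikes.
    sample = [10.0] * 990 + [50.0] * 10
    print(f"average: {average_fps(sample):.1f} FPS")
    print(f"1% low:  {low_fps(sample, 0.01):.1f} FPS")
    print(f"0.1% low: {low_fps(sample, 0.001):.1f} FPS")
```

With a definition like this, a few long frames are enough to pull the low figures far below the average, which is one way a game can show a poor minimum while still feeling smooth for most of the run.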
