Some readers have asked why the GeForce 3000 series cards fared better in our launch review, especially in WQHD, than on quite a few other sites. Others argued that everything would now have to be re-benchmarked, because the new drivers released alongside the GeForce RTX 4090 make the older GeForce cards look better. The irony is that, even before running the first benchmark, I had decided to retest everything from scratch as usual and to use a single driver for all GeForce cards. In retrospect, this not only saves work but also shows that the review, although it includes fewer cards for the reasons mentioned, was consistent from day one. I also wanted to measure the GeForce RTX 4090 first so that I could adjust the benchmarks, or even replace them, if necessary…

The 521.90 press driver we used already contains exactly these improvements for the older cards, which also confirms the hints from some sources beforehand that recycling old data and doing preparatory work would not pay off this time. That is exactly why I am turning the tables today and, for a change, showing what would have happened if I had given in to convenience or looser time management. At the very least, I ran the GeForce RTX 3090 Ti, the fastest 3000 card, through all games again with the older WHQL driver 517.48. The two new drivers (521.90 Press and 522.25 WHQL), by contrast, are almost identical at the binary level, and a plausibility test confirmed this: the results agree completely within the possible measurement tolerances.

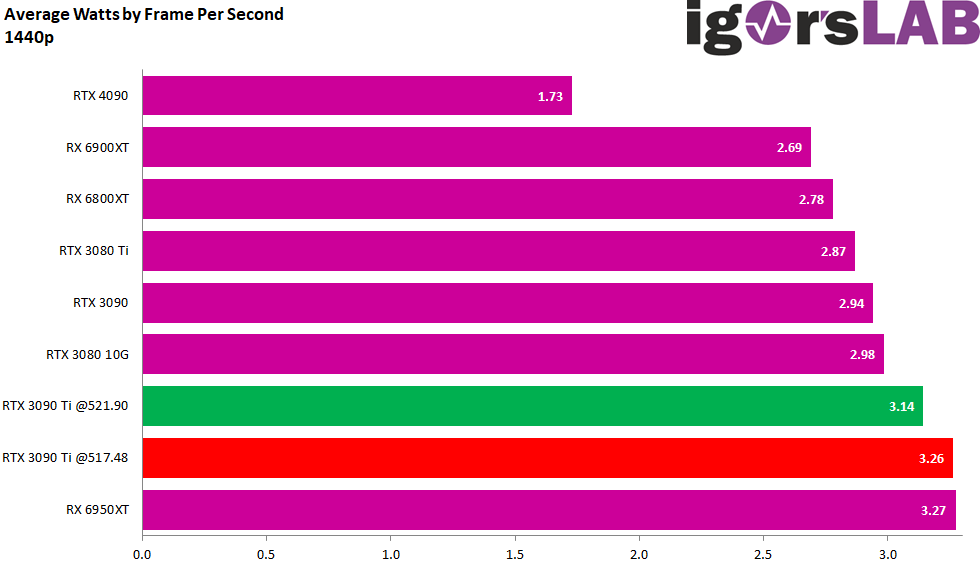

Performance losses of the GeForce RTX 3090 Ti with the old WHQL driver 517.48 in WQHD (2560 x 1440 pixels)

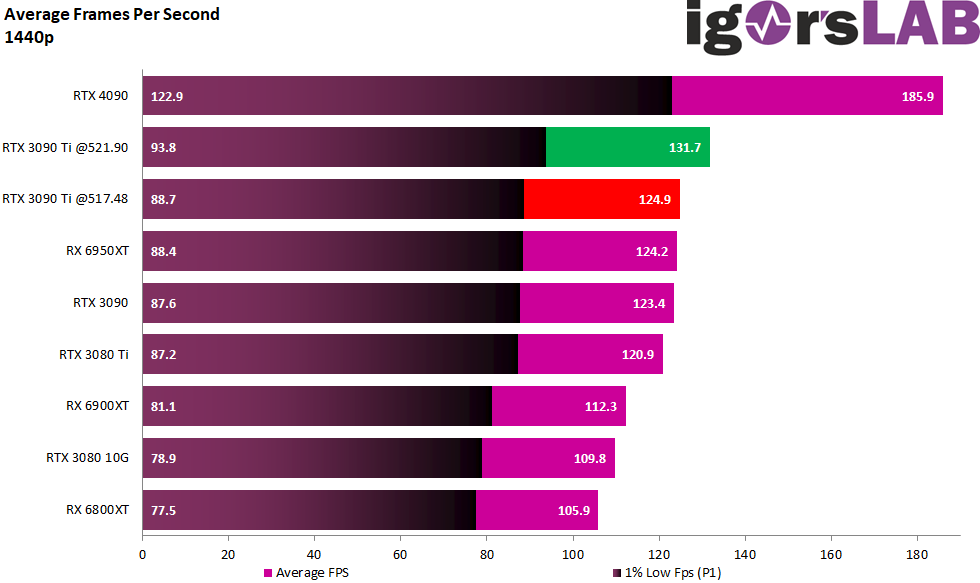

Let’s first look at the cumulative FPS and P1 values of the tested cards in direct comparison with the two GeForce RTX 3090 Ti measurements that used different drivers. The green bars represent the new driver and the benchmark results from my launch review; the red bars show what would have happened if I had used the old driver. Not every game in my benchmark suite benefits, however. Judging by Far Cry 6 (where a bug was also fixed) and Cyberpunk 2077 alone, the card would probably have lost up to 10 percentage points with the old driver. I tested the majority of the games with their respective RT options anyway, so I’ll refrain from splitting the results into “with” and “without” here.

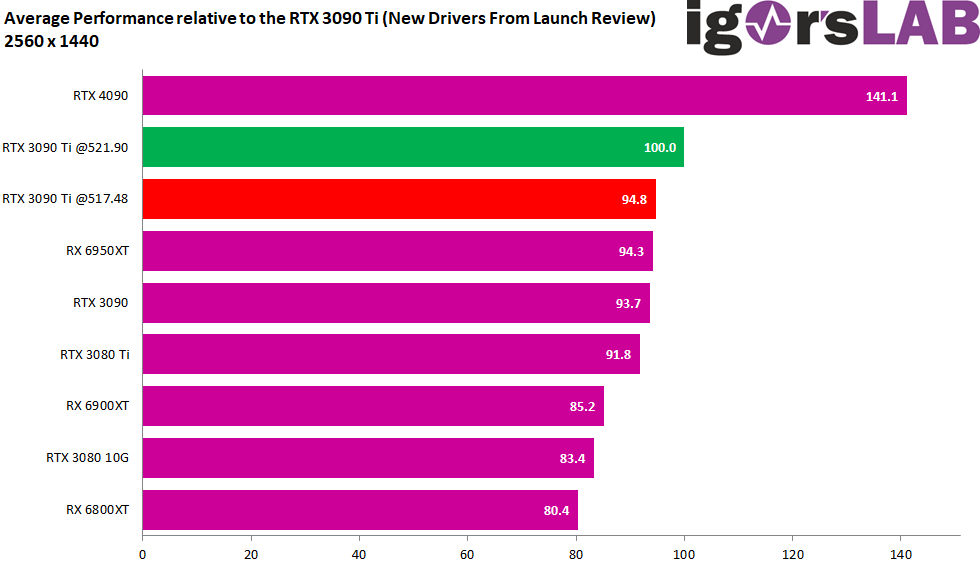

On average, the older driver is at a disadvantage of 5.2 percentage points, so the gap to the GeForce RTX 4090 would also grow to a whopping 146.3 percentage points. That would really do the Ampere cards an injustice. Readers will therefore have to take a closer look at which drivers were used in some reviews in order to avoid being swept up by the hype train.
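How large such a gap looks in an index chart depends on which result is set to 100 percent. The following minimal sketch illustrates this with purely hypothetical placeholder values; the card names and FPS figures are assumptions for illustration and are not measurements from this review.

```python
def index_vs_baseline(fps_by_card: dict[str, float], baseline: str) -> dict[str, float]:
    """Express every card's average FPS as a percentage of the baseline card."""
    base = fps_by_card[baseline]
    return {card: 100.0 * fps / base for card, fps in fps_by_card.items()}


# Hypothetical placeholder values, NOT results from the review.
fps = {
    "Card A (new driver)": 100.0,
    "Card A (old driver)": 95.0,
    "Card B": 150.0,
}

# Same Card B result, two different baselines.
vs_new = index_vs_baseline(fps, "Card A (new driver)")
vs_old = index_vs_baseline(fps, "Card A (old driver)")

print(f"Card B vs. new-driver baseline: {vs_new['Card B']:.1f} %")
print(f"Card B vs. old-driver baseline: {vs_old['Card B']:.1f} %")
```

The faster card has not changed at all in this example, yet its lead appears larger as soon as the slower result of the comparison card is used as the reference.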

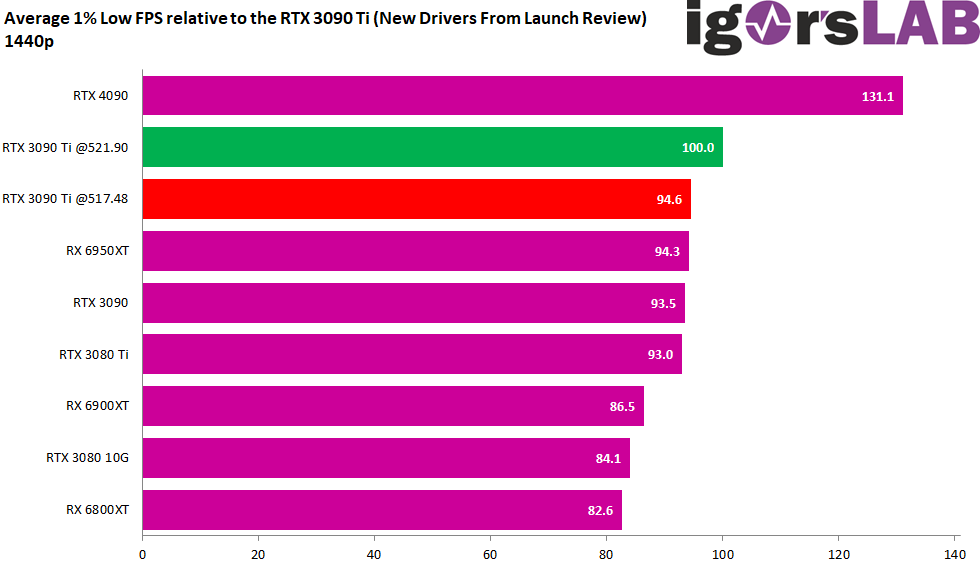

The P1 Low, i.e. the frame rate of the worst one percent of frames, has also changed quite a bit here. A disadvantage of 5.4 percentage points would really do the GeForce RTX 3090 Ti a great injustice, especially since this card's advantage over the Radeon RX 6950XT would also disappear and both cards would render de facto equally fast if the old drivers had been used. The charts also show very nicely that the CPU load has dropped significantly. This in turn indicates that the CPU-limit disadvantage of very fast cards at low resolutions has clearly shrunk with the new drivers!
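For readers who want to reproduce such a value from their own frame-time logs, here is a minimal sketch. It assumes one common definition of the 1 % low, namely the average frame rate over the slowest one percent of frames; other tools report the 1st percentile instead, and the exact method used for the charts here is not stated in this article. The function names and the sample data are illustrative.

```python
def average_fps(frame_times_ms: list[float]) -> float:
    """Average FPS: number of frames divided by total render time."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def p1_low_fps(frame_times_ms: list[float]) -> float:
    """Average FPS over the slowest 1 % of frames (assumed definition)."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)  # at least one frame
    worst = slowest_first[:count]
    return 1000.0 * len(worst) / sum(worst)


# Illustrative sample: 99 smooth frames at 10 ms and one 50 ms spike.
sample = [10.0] * 99 + [50.0]
print(f"Average FPS: {average_fps(sample):.1f}")
print(f"P1 low FPS:  {p1_low_fps(sample):.1f}")
```

A single slow frame barely moves the average, but it dominates the P1 value, which is why this metric reacts so clearly to CPU-side driver overhead.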

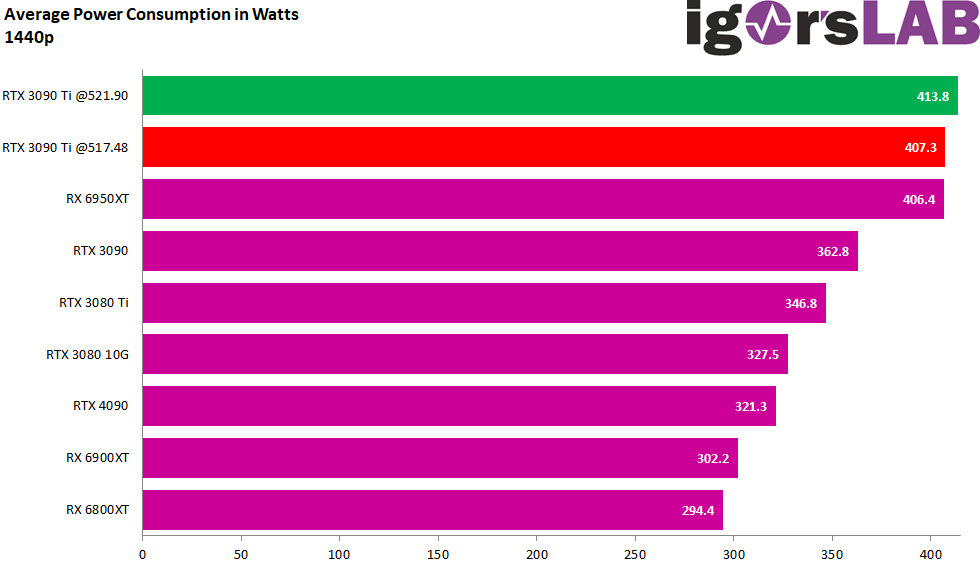

The downside of more gaming performance is, of course, slightly higher power consumption. Speed almost never comes for free, and Jensen is not God either. With the new drivers, the card's average power consumption is 6.5 watts, i.e. 1.6 percentage points, higher, but this is almost offset by the CPU drawing 4 watts less on average. In return, you get a whopping 5.4 percentage points more performance…

…which is then also reflected in the efficiency. This is where the old driver really suffers. The system with the new drivers is considerably more efficient, not only for the graphics card alone but also for GPU and CPU combined. Once again, the old drivers do the GeForce RTX 3090 Ti no favors.
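The arithmetic behind this can be sketched in a few lines. The example below uses the averages quoted above (6.5 watts more for the graphics card, 4 watts less for the CPU, 5.4 more performance at 1.6 more power) and treats the two percentage figures as simple relative changes, which is an assumption; the function names are illustrative.

```python
def net_power_delta_w(gpu_delta_w: float, cpu_delta_w: float) -> float:
    """Combined change in power draw of GPU and CPU in watts."""
    return gpu_delta_w + cpu_delta_w


def efficiency_gain_pct(perf_gain_pct: float, power_gain_pct: float) -> float:
    """Change in performance per watt when both values change by a percentage."""
    perf_factor = 1.0 + perf_gain_pct / 100.0
    power_factor = 1.0 + power_gain_pct / 100.0
    return (perf_factor / power_factor - 1.0) * 100.0


# Averages quoted in the text: GPU +6.5 W, CPU -4 W.
net_w = net_power_delta_w(6.5, -4.0)
# Assumption: 5.4 and 1.6 are read as relative changes in percent.
gain = efficiency_gain_pct(5.4, 1.6)

print(f"Net extra power (GPU + CPU): {net_w:.1f} W")
print(f"GPU performance per watt:    {gain:+.1f} %")
```

Under these assumptions, the extra 2.5 watts in total buy roughly 3.7 percent more performance per watt on the graphics card.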

WQHD in particular shows that NVIDIA has noticeably improved DX12 performance and that games always benefit when the CPU load, which was previously above average, drops as well. But what about Ultra HD? Please turn the page!
